AI INDUSTRY INTELLIGENCE · SIGNAL & FLOW

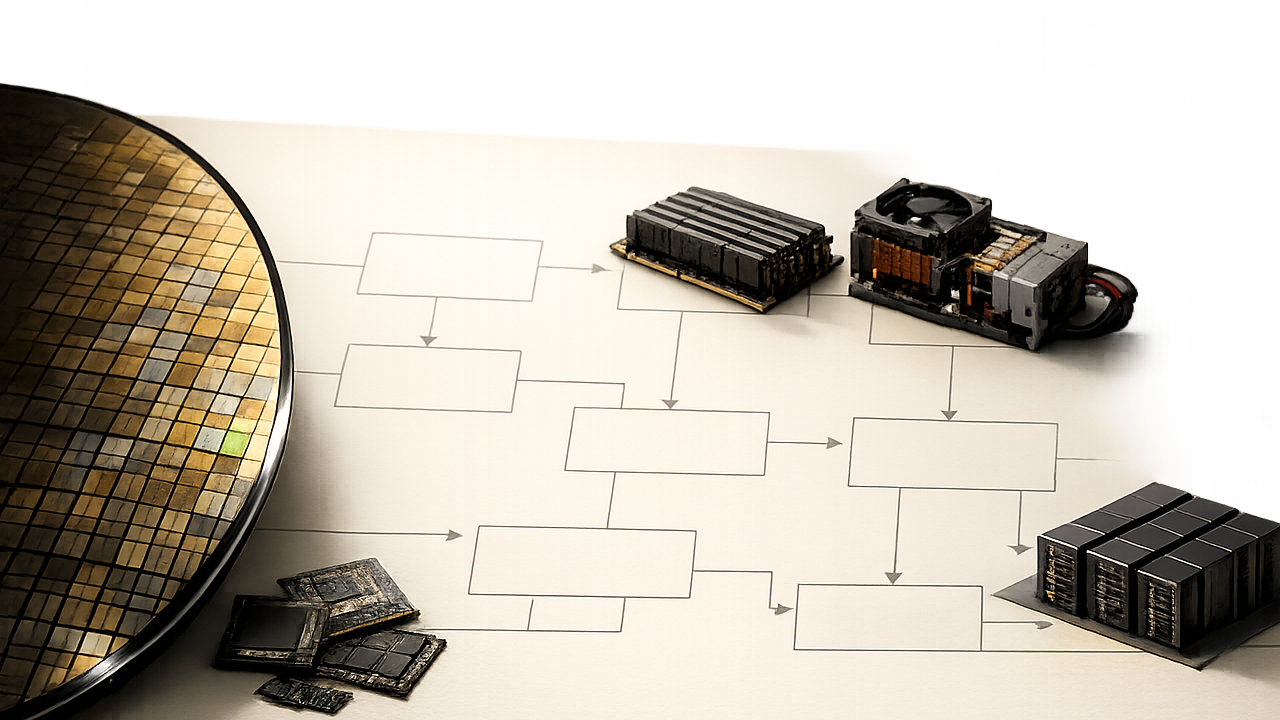

After Nvidia: The Answer Is Outside the GPU Bottleneck

The answer is not “find one company that replaces Nvidia.” That framing is too narrow.

A better answer is this:

The next AI winners are more likely to come from companies solving bottlenecks outside the GPU than from a single Nvidia replacement.

The bottleneck sequence I am watching looks like this:

- HBM and memory: inference and agentic AI need longer context, KV cache, and persistent state.

- Packaging and foundry: compute and memory must be packed more tightly into one system.

- Networking and interconnects: more GPUs only matter if they can be connected reliably.

- Power, cooling, and racks: chips do not produce tokens without electricity and heat removal.

- Data-center land, grid access, and permitting: the slowest physical layer can cap the whole growth curve.

So the answer is not simply “AMD after Nvidia.” AMD can be part of the discussion, but that question is too small. AI infrastructure is no longer just a GPU market. It is becoming a token factory, where output is capped by the slowest layer in the stack. And once you think in factories, bottlenecks keep moving from one layer to another.

My answer: keep the core, look for expansion rails, verify the satellites

I would split the opportunity into three groups.

1. Core engines

These are the companies where earnings power, customer demand, ecosystem position, and technical advantage are already visible.

- Nvidia: the center of the AI factory, expanding from GPUs into networking, software, and system-level infrastructure.

- TSMC: the manufacturing layer behind advanced AI chips, whether the chip is a GPU or a custom ASIC.

- SK hynix: one of the clearest beneficiaries of the HBM memory bottleneck.

My answer here is straightforward. The core engine thesis does not look broken yet. But quality is not the same as entry price. If expectations are already high, the right action may be holding or staged buying, not chasing.

2. Expansion rails

These are the areas that benefit as the bottleneck moves outward.

- Networking and interconnects: exposure along the lines of Arista, Broadcom, and Marvell

- Power and cooling: Vertiv, power equipment, UPS, high-density racks, liquid cooling

- Packaging, substrates, and test: CoWoS, advanced packaging, high-performance substrates, inspection tools

- Optics and co-packaged optics (CPO): beneficiaries of rising data movement inside AI clusters

The answer here is not “buy everything.” Expansion rails belong in the waiting bucket until numbers confirm the story. Orders, margins, and free cash flow conversion matter. “Second-order beneficiary” is a useful phrase, but it can also be dangerous. Narratives often move faster than earnings.

3. Option satellites

These have upside, but either the numbers are weak or the price has already moved ahead of the evidence.

- Early CPO and optical component themes

- Some high-multiple cooling and power equipment names

- Peripheral data-center real estate and power infrastructure plays

- Smaller next-generation memory or storage names

My answer is clear. Option satellites are watchlist names, not default buys. They can work, but they need stricter verification. A bullish YouTube clip or an attractive theme is not enough.

My answer on AI CapEx: growth signal and liquidity burden

The most important point from the source material is that AI CapEx cuts both ways.

It is a growth signal. If hyperscalers keep spending, that supports demand visibility for GPUs, HBM, foundry, networking, power, cooling, and data-center equipment. In shorthand, that is Growth+.

But it is also a liquidity burden. Bigger CapEx means more depreciation, higher power costs, more debt issuance, and pressure on free cash flow. If rates or credit spreads move higher, the same CapEx can be re-priced from “future growth investment” to “cash-flow pressure.” In shorthand, that is Liquidity-.

So my answer is:

AI CapEx is fuel for the bull case, but it is also the weak spot if the market gets overheated.

The theme still deserves attention. But stocks that have already priced in too much can correct even when the long-term story remains strong.
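The two-sided nature of CapEx can be made concrete with a little arithmetic. The sketch below uses the standard definition of free cash flow (operating cash flow minus CapEx). Every figure in it is hypothetical and chosen only for illustration; none is taken from any company's filings, and the function names are my own.

```python
def free_cash_flow(operating_cash_flow: float, capex: float) -> float:
    """Free cash flow = operating cash flow minus capital expenditure."""
    return operating_cash_flow - capex


def fcf_margin(operating_cash_flow: float, capex: float, revenue: float) -> float:
    """Free cash flow as a fraction of revenue."""
    return free_cash_flow(operating_cash_flow, capex) / revenue


# HYPOTHETICAL figures (billions), invented for illustration only.
# "before": a year with moderate CapEx.
# "after":  revenue and operating cash flow grow 10%, but CapEx more than doubles.
before = {"revenue": 100.0, "ocf": 40.0, "capex": 15.0}
after = {"revenue": 110.0, "ocf": 44.0, "capex": 35.0}

for label, year in (("before", before), ("after", after)):
    fcf = free_cash_flow(year["ocf"], year["capex"])
    margin = fcf_margin(year["ocf"], year["capex"], year["revenue"])
    print(f"{label}: FCF = {fcf:.1f}, FCF margin = {margin:.1%}")
```

In this made-up case revenue grows, which is the Growth+ reading, while free cash flow shrinks, which is the Liquidity- reading. Which reading the market emphasizes is a judgment the numbers alone do not settle.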

What to watch now

I would track these six signals:

- HBM average selling price (ASP), yield, and long-term supply agreements

- Whether ASIC, networking, and packaging demand show up in reported numbers

- Whether power contracts and grid connection delays remain bottlenecks

- Whether hyperscaler CapEx converts into remaining performance obligations (RPO), revenue, and free cash flow

- RSI (relative strength index) and price distance from moving averages for QQQ, SMH, and semiconductor leaders

- Whether rates, oil, and the dollar start pressuring high-multiple AI stocks

If these signals stay healthy, the AI infrastructure thesis remains intact. If CapEx keeps rising but free cash flow and order conversion weaken, the market’s language can shift from “AI growth” to “AI overinvestment.”
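Of the six signals, RSI and moving-average distance are the two that reduce to simple formulas. The sketch below is one common way to compute them, assuming Wilder-style smoothing for RSI, a 14-bar period, and a 50-bar simple moving average. Those parameter choices and the function names are my assumptions, not settings prescribed in this note, and charting platforms may use slightly different variants.

```python
from typing import Sequence


def rsi(closes: Sequence[float], period: int = 14) -> float:
    """RSI of the latest bar using Wilder-style smoothing.

    Needs at least period + 1 closing prices.
    """
    if len(closes) < period + 1:
        raise ValueError("need at least period + 1 closes")
    changes = [b - a for a, b in zip(closes, closes[1:])]
    gains = [max(c, 0.0) for c in changes]
    losses = [max(-c, 0.0) for c in changes]
    # Seed with simple averages over the first `period` changes.
    avg_gain = sum(gains[:period]) / period
    avg_loss = sum(losses[:period]) / period
    # Wilder smoothing over the remaining changes.
    for gain, loss in zip(gains[period:], losses[period:]):
        avg_gain = (avg_gain * (period - 1) + gain) / period
        avg_loss = (avg_loss * (period - 1) + loss) / period
    if avg_loss == 0.0:
        # No losses in the window: treat a flat series as neutral (assumption).
        return 50.0 if avg_gain == 0.0 else 100.0
    relative_strength = avg_gain / avg_loss
    return 100.0 - 100.0 / (1.0 + relative_strength)


def ma_distance(closes: Sequence[float], window: int = 50) -> float:
    """Fractional distance of the last close from its simple moving average."""
    if len(closes) < window:
        raise ValueError("need at least `window` closes")
    moving_average = sum(closes[-window:]) / window
    return closes[-1] / moving_average - 1.0
```

A high RSI together with a large positive `ma_distance` is the kind of reading that argues for staged buying rather than chasing. Any specific threshold is a convention, not something these functions decide.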

Investment conclusion

My conclusion is simple.

- The AI trade does not look finished yet. The bottleneck is spreading from GPUs into memory, packaging, networking, power, and cooling.

- The better answer is core engines plus selected expansion rails. Do not abandon Nvidia, TSMC, or HBM exposure. Look for the companies that turn the next bottleneck into pricing power.

- Classification comes before chasing. Separate core holdings, waiting-list candidates, and watchlist options. Then act only when price and timing make sense.

Finding what comes after Nvidia is not about guessing the company that replaces Nvidia.

It is about finding the next constraint in the AI factory and identifying who can turn that constraint into cash flow.

Investor note

This article is investment research commentary, not a recommendation to buy or sell any security. Figures and direct quotes from auto-captioned material should be rechecked against official sources.